Preview

This post walks through the major CNN architectures and looks at what each one did to improve performance.

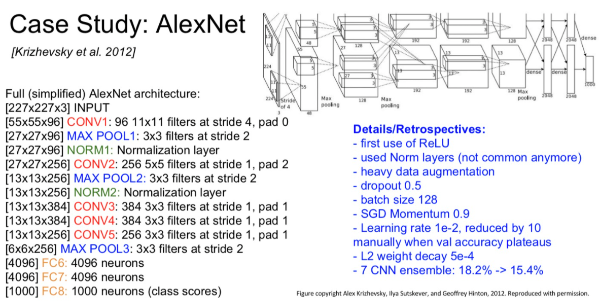

AlexNet

- The first large-scale CNN

- Popularized ReLU as the activation function in CNNs

- Trained across 2 GPUs in a parallel structure for faster computation

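Number of layers: 8 (5 convolutional + 3 fully connected)

Input: color images

Data augmentation: the number of training samples is increased by horizontally flipping, cropping, or adjusting the RGB values of the dataset images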
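As a minimal sketch of the flip and crop augmentations listed above, the snippet below works on a tiny image stored as a nested Python list. The helper names `hflip` and `random_crop` are hypothetical, and plain lists stand in for real image tensors; an image library would normally do this work.

```python
import random

def hflip(img):
    """Mirror an image (a list of rows) left to right."""
    return [row[::-1] for row in img]

def random_crop(img, size, rng):
    """Cut a size x size patch at a random position."""
    h, w = len(img), len(img[0])
    top = rng.randint(0, h - size)
    left = rng.randint(0, w - size)
    return [row[left:left + size] for row in img[top:top + size]]

# 4x4 toy "image"; each pixel is a single number for brevity
img = [[r * 4 + c for c in range(4)] for r in range(4)]
rng = random.Random(0)  # fixed seed, an assumption for reproducibility

flipped = hflip(img)
patch = random_crop(img, 3, rng)
augmented = [img, flipped, patch]  # one original becomes three samples
```

Each transformed copy keeps the original label, which is why this enlarges the dataset without new annotation work.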

Norm layer: Local Response Normalization (LRN), not batch normalization; it is rarely used today.

First conv layer filter: 11*11, stride=4 / pooling: 3*3, stride=2
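To see what these filter and stride settings do to the feature-map size, here is a small sketch of the standard output-size formula. The input size of 227 is an assumption (the notes do not state it), and `out_size` is a hypothetical helper name.

```python
def out_size(n, k, stride, pad=0):
    """Spatial size after a conv or pooling layer: floor((n + 2*pad - k) / stride) + 1."""
    return (n + 2 * pad - k) // stride + 1

INPUT = 227  # assumed input width/height; not stated in the notes above

conv1 = out_size(INPUT, k=11, stride=4)  # 11*11 filter, stride 4
pool1 = out_size(conv1, k=3, stride=2)   # 3*3 pooling, stride 2
print(conv1, pool1)  # 55 27
```

The large stride in the first layer is what shrinks the image so quickly.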

dropout: 0.5

batch size: 128

SGD Momentum : 0.9

Learning rate : 1e-2

L2 weight decay : 5e-4
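The training settings above (momentum 0.9, learning rate 1e-2, L2 weight decay 5e-4) can be sketched as a single update rule. This is one common formulation of SGD with momentum, shown on a scalar weight; the toy loss and the name `sgd_step` are assumptions for illustration only.

```python
MOMENTUM = 0.9
LR = 1e-2
WEIGHT_DECAY = 5e-4

def sgd_step(w, v, grad):
    """One SGD step with momentum and L2 weight decay for a scalar weight."""
    # Weight decay adds WEIGHT_DECAY * w to the gradient, pulling w toward 0
    v = MOMENTUM * v - LR * (grad + WEIGHT_DECAY * w)
    return w + v, v

# Toy loss (w - 3)^2 is an assumption; its gradient is 2 * (w - 3)
w, v = 0.0, 0.0
for _ in range(300):
    w, v = sgd_step(w, v, 2 * (w - 3))
```

After the loop `w` settles just below 3, because the weight decay term pulls it slightly toward zero.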

Ensemble of 7 CNNs: top-5 error 18.2% -> 15.4%

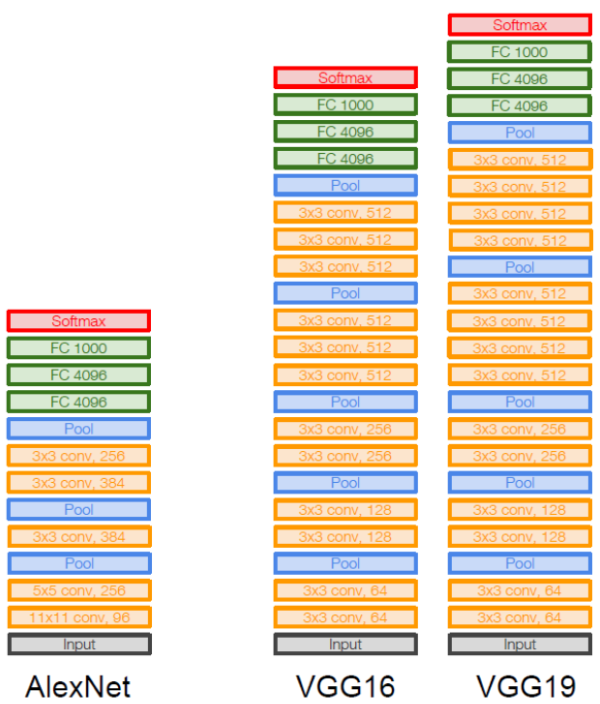

* Starting with VGG and GoogLeNet, networks began stacking many more layers.

VGG

- Improves performance by stacking the network to 16-19 layers

Structure: repeated Conv and max-pooling blocks

Conv: 3*3 filter, stride=1

Max-pool: 2*2, stride=2

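* Earlier networks mostly used larger filters such as 5*5; VGG uses small 3*3 filters instead, which reduces the number of parameters and lets more layers be stacked for better performance.

* With more layers, the signal passes through more activation functions, so the network gains more non-linearity.

* Padding keeps the feature-map size from shrinking at each convolution, even as the network gets deeper.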
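The trade-off between one 5*5 filter and two stacked 3*3 filters can be checked with simple arithmetic. The sketch below is illustrative: the channel count of 64, the input size of 224, and the helper names are assumptions, and biases are ignored.

```python
def conv_params(k, c_in, c_out):
    """Weights in one k x k conv layer (biases ignored)."""
    return k * k * c_in * c_out

def stacked_receptive_field(k, n_layers):
    """Receptive field of n stacked k x k convs with stride 1."""
    return 1 + n_layers * (k - 1)

C = 64  # assumed channel count, same for input and output

one_5x5 = conv_params(5, C, C)        # 25 * C^2
two_3x3 = 2 * conv_params(3, C, C)    # 18 * C^2
print(stacked_receptive_field(3, 2))  # 5, same coverage as one 5*5 filter
print(one_5x5, two_3x3)               # 102400 73728

# 3*3 conv, stride 1, padding 1: the spatial size is unchanged
same = (224 + 2 * 1 - 3) // 1 + 1     # 224 is an assumed input size
print(same)                           # 224
```

Two 3*3 layers cover the same 5*5 region with fewer weights and one extra activation in between.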

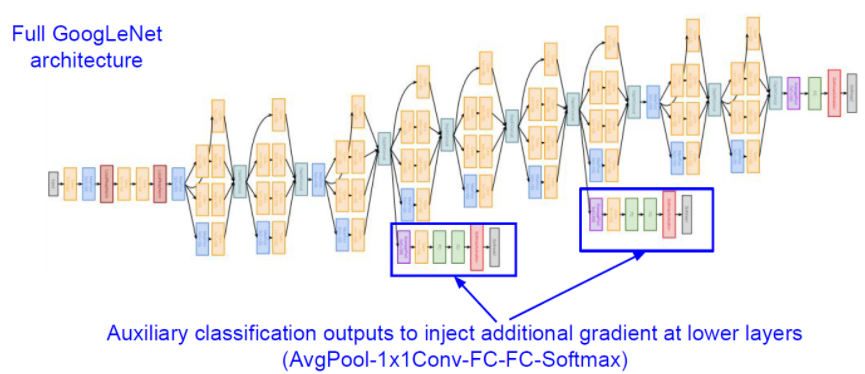

GoogLeNet

- 22 layers

- "Inception" module

- No FC layers except the final classifier (global average pooling is used instead)

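* Inception module: several filters of different sizes receive the same input in parallel, and their outputs are concatenated.

* This raises the computational cost, so a 1*1 Conv layer is placed first to reduce the input depth (the "bottleneck layer").

* Auxiliary classifiers are attached at intermediate layers to inject extra gradient during backpropagation, so that the vanishing-gradient problem does not occur.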
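A rough multiplication count shows why the 1*1 bottleneck helps. All sizes below (a 28*28 feature map, 256 input channels reduced to 64, 128 output channels, and the branch widths) are assumed for illustration, and `conv_mults` is a hypothetical helper.

```python
def conv_mults(h, w, k, c_in, c_out):
    """Multiplications for a k x k conv that keeps the h x w spatial size."""
    return h * w * k * k * c_in * c_out

# All sizes below are assumed for illustration
H = W = 28
C_IN, C_MID, C_OUT = 256, 64, 128

# 5*5 conv applied directly to the full input depth
direct = conv_mults(H, W, 5, C_IN, C_OUT)

# 1*1 conv first shrinks the depth to C_MID, then the 5*5 conv runs on less data
bottleneck = conv_mults(H, W, 1, C_IN, C_MID) + conv_mults(H, W, 5, C_MID, C_OUT)

print(direct, bottleneck)  # 642252800 173408256

# Parallel branches are concatenated along depth, so output channels add up
branch_channels = {"1x1": 64, "3x3": 128, "5x5": 32, "pool": 32}  # assumed widths
out_depth = sum(branch_channels.values())
```

With these numbers the bottleneck version needs roughly 3.7 times fewer multiplications for the same output shape.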

ResNet

- Very deep: up to 152 layers

- Residual connections solve the degradation problem

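* Degradation: beyond a certain depth, a deeper network becomes harder to train, and its accuracy gets worse rather than better.

* Skip connections address the degradation problem.

* Conventional layers try to learn the target mapping H(x) directly. A residual block instead adds the input x to its output, so H(x) = F(x) + x, and the layers only have to learn F(x) = H(x) - x.

* When the identity mapping is close to optimal, training just needs to push F(x) = H(x) - x toward 0. H(x) - x is called the residual.

* Uses batch normalization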
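The skip connection can be sketched in a few lines of plain Python. This is a simplified illustration, not the actual ResNet block: it uses two small fully connected layers in place of convolutions, and it leaves out batch normalization and the activation after the addition. All helper names are hypothetical.

```python
def relu(v):
    return [max(0.0, a) for a in v]

def matvec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(w * a for w, a in zip(row, v)) for row in m]

def residual_block(x, w1, w2):
    """Return H(x) = F(x) + x with F(x) = W2 * relu(W1 * x)."""
    f = matvec(w2, relu(matvec(w1, x)))       # residual branch F(x)
    return [fi + xi for fi, xi in zip(f, x)]  # skip connection adds the input back

x = [1.0, -2.0, 3.0]
zeros = [[0.0] * 3 for _ in range(3)]

# With all-zero weights F(x) = 0, so the block is exactly the identity mapping
print(residual_block(x, zeros, zeros))  # [1.0, -2.0, 3.0]
```

Because the identity is available "for free", adding more blocks should not make the network worse than its shallower version, which is the intuition behind the fix for degradation.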

SENet

- Squeeze and excitation networks

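- Conventional CNNs had no attention mechanism for focusing on the important information.

- Idea: add an attention module made of squeeze + excitation.

* Squeeze: global information embedding

- Extracts the important information with global average pooling, compressing each channel into a channel descriptor

* Excitation: computes per-channel importance from the dependencies between channels / FC -> ReLU -> FC -> sigmoid, producing an attention score between 0 and 1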
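The squeeze and excitation steps can be put together as a small sketch. The feature map is a nested list of shape channels x height x width, the weights are made-up values rather than trained ones, and the function names are hypothetical.

```python
import math

def squeeze(fmap):
    """Global average pooling: one number per channel (the channel descriptor)."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]

def fc(weights, v):
    """Fully connected layer without bias."""
    return [sum(w * a for w, a in zip(row, v)) for row in weights]

def excite(z, w1, w2):
    """FC -> ReLU -> FC -> sigmoid, giving one score in (0, 1) per channel."""
    hidden = [max(0.0, a) for a in fc(w1, z)]
    return [1.0 / (1.0 + math.exp(-a)) for a in fc(w2, hidden)]

def se_block(fmap, w1, w2):
    scores = excite(squeeze(fmap), w1, w2)
    # Rescale every value of a channel by that channel's attention score
    scaled = [[[s * p for p in row] for row in ch] for ch, s in zip(fmap, scores)]
    return scaled, scores

# 2 channels of 2x2; the weights are made-up values, not trained ones
fmap = [[[1.0, 3.0], [5.0, 7.0]], [[2.0, 2.0], [2.0, 2.0]]]
w1 = [[0.5, 0.5]]     # 2 channels -> 1 hidden unit (reduction)
w2 = [[1.0], [-1.0]]  # 1 hidden unit -> 2 channels

scaled, scores = se_block(fmap, w1, w2)
```

Here the first channel gets a score close to 1 and the second a score close to 0, so the block amplifies one channel and suppresses the other.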
